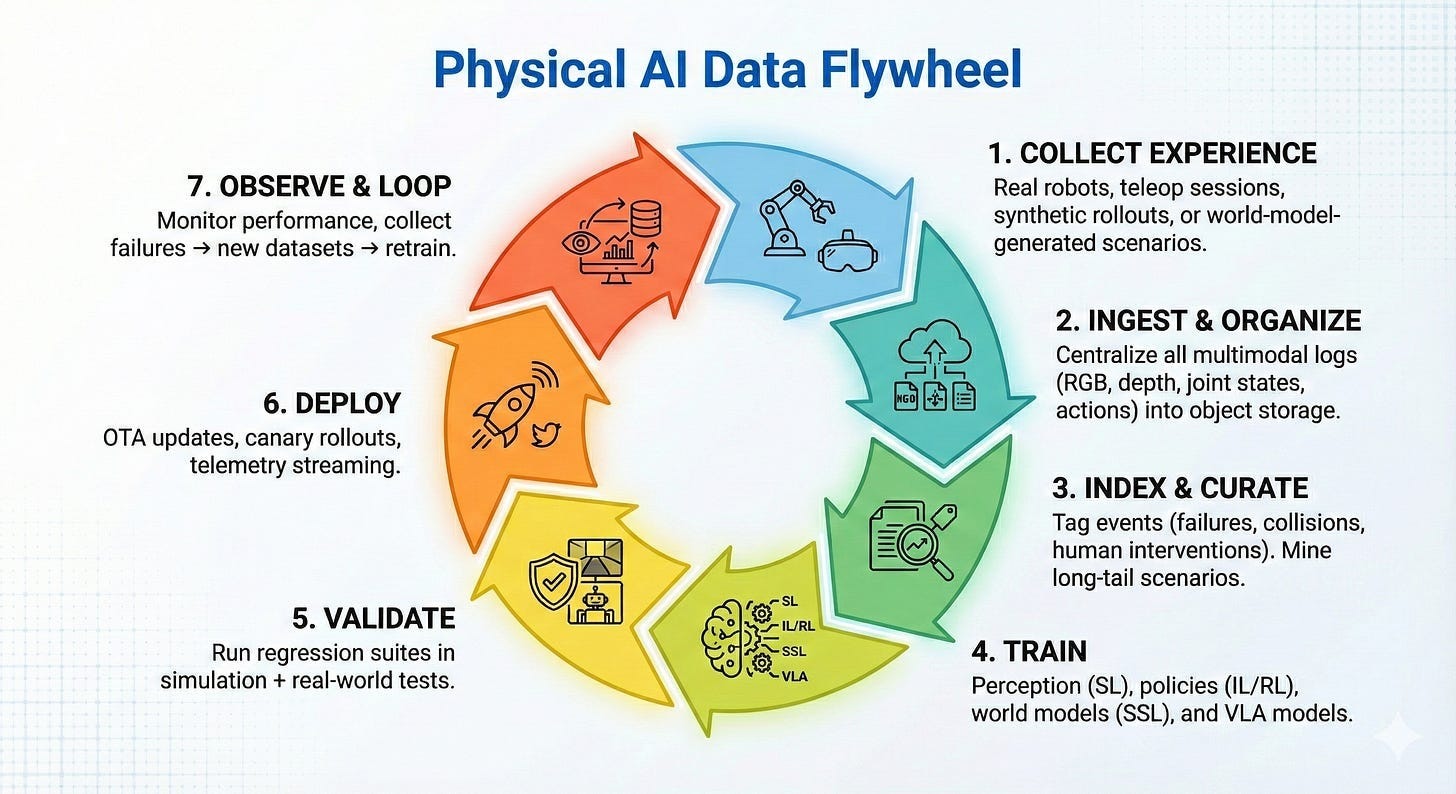

My last essay contrasted MLOps with ‘RobotOps’: the discipline required to break intelligence out of tiny screens and into the physical world.

It also followed a day in the life of a robotics developer named Maya, and a stressful one at that: fumbling and bumbling her way through the lifecycle of robotics data, i.e. a physical AI ‘data flywheel’.

Today, we’re blasting Maya into the future.

This is what it feels like when the data flywheel runs itself.

Enjoy.

PS: I first wrote this before the release of agentic tools like OpenClaw. Suddenly, this stuff is not nearly as sci-fi as you might think. Much of ‘2033’ will likely be pulled into 2028-29, if not sooner.

Robots-as-a-Service

Maya was an entrepreneur, and the kind the world needed to see.

She set the standard for what’s possible in the age of AI. Or rather, the age of embodied AI. The age of near-infinite leverage.

Turns out, companies didn’t make huge headcount cuts. They empowered existing headcount to do more. Much, much more. This IKEA example from 2026 set the stage.

Entrepreneurs did the same, and in robotics, Maya became the shining example.

A roboticist by trade and an agentic tinkerer by night, Maya caught one of AI’s largest waves: owning and managing her own fleet of robots for industrial tasks, a business model similar to trucking or large-equipment rental.

Of course, most Fortune 500 companies owned their own fleets, with the in-house capability to post-train, deploy, and orchestrate across their infrastructure (factories, warehouses, refineries, you name it).

But the SMB market? A very different story. Those companies needed to outsource, and Maya was primed for the opportunity. Texas was a honeypot of small manufacturers and logistics firms. She seized it.

But Maya was also a mom, and the kind who refused to accept the status quo of choosing between family and work.

With infinite-leverage machines, she could do both. Here’s how.

8am: Observe & Collect Experience

Maya’s day doesn’t start with code. And it certainly doesn’t involve alarming Slack messages from her client’s warehouse team.

It starts with her daughter’s warm embrace and a fit of giggles in bed.

Then comes a friend: as Maya steps into her office, a cute little droid rolls in behind her, hand-delivering a fresh, foamy latte.

She takes a sip and slips on her glasses. A small display lights up inside the lenses.

“Thanks, Bill. Can you show me last night’s report?”

Maya’s AR glasses come to life, displaying an immersive array of insights.

It’s not rows of raw robot logs and file names. It’s a clean list of actual events: failures, anomalies, and edge cases, all detected automatically. A stumble here, a dropped package there, and a surprising number of wrong turns.

Her main KPI in the top right corner is yellow.

The average package transport time has crept up by a few seconds. Not good, especially with the holidays right around the corner.

“Bill, show me the failure events with the longest time to recovery and then replay the top three scenes.”

A timestamp floats out of the report: 2:17am. Event: Package dropped. Recovery time: 14.3 seconds. Type: Rare and emerging.

Immediate context, on demand.

“Play event.”

Her glasses switch into VR mode. Suddenly, Maya is the robot, reliving its experience.

As expected, each scene shows the same thing: a stack of boxes left out in the rain, slippery as can be.

The good old-fashioned sim-to-real gap strikes again.

9:30am: Understand the Gaps

“Bill, what are you seeing here? Where is the model falling short?”

The scene collapses and Maya is back in her office. Three holographic panels appear, each one replaying a different version of the failure.

“I’ve clustered the incidents,” he says, his voice chirpy and calm. “Eighty-two percent correlate with low-friction surface conditions after rainfall. This scenario is underrepresented in both your real-world dataset and simulation corpus.”

A 3D heatmap blooms into her field of view. It’s not just a spatial dashboard. It’s a diagnosis.

Red islands mark blind spots in the model’s experience. Yellow gradients show where performance degrades gracefully. Green shows confidence.

“So what changed?” Maya asks.

“Humidity,” Bill replies. “The model has insufficient exposure to wet-surface interactions at this humidity level, with these payload weights. And looking ahead, these conditions are going to persist on and off for the next week.”

Maya sits back and smiles.

She remembers the days of manually searching a data lake, knee-deep in SQL queries and half-broken scripts, trying to guess what the robot hadn’t seen enough of.

Now for the fun part…

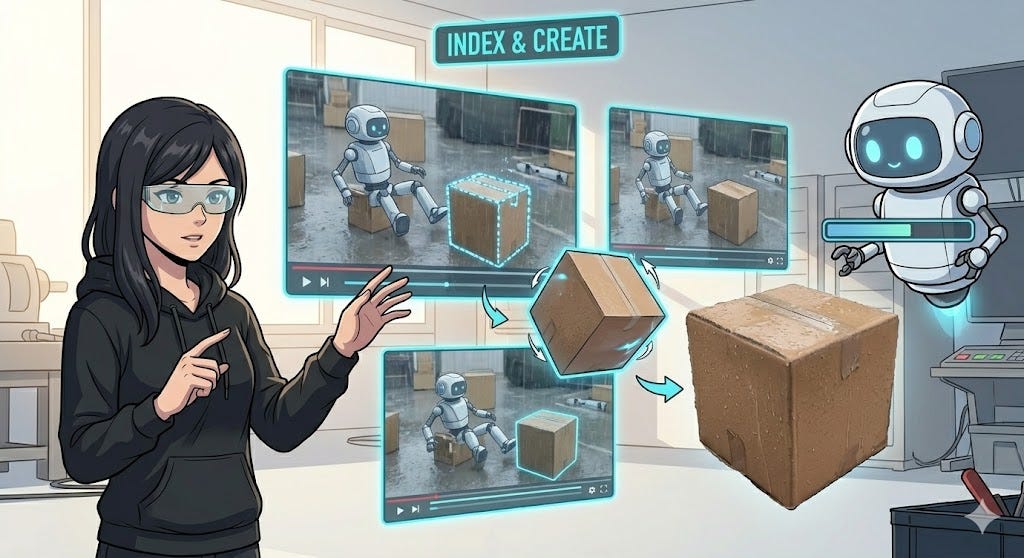

10am: Creating the Right Experience

“Okay, Bill, let’s fix this. Do we have the right 3D assets and physics parameters in our sim?”

A rotating saucer appears.

Numerous 3D models of cardboard boxes are displayed on top, each slightly soggy and deformed. Quite the dessert plate.

“Here are the current assets in our library,” Bill says.

Something is off. The boxes in the videos are a different shape, size, and color.

“Ah, I forgot we got a new box vendor! Bill, extract images of the boxes and let’s GSplat ’em.”

“On it.”

Maya looks over to the video panels. A shimmering line traces the new boxes and pops them out like a cookie cutter. The images blur, spin, and voila: what was once a 2D image is now a perfect 3D model, with the exact same texture and lighting as the boxes in the video.

“Great. Now let’s optimize the physics engine and create the scene,” Maya commands.

“A step ahead of you. Here’s the plan.”

The 3D models and videos disappear. In their place appears a workflow diagram showing the detected gaps and the steps Bill took to fill them: a new box material with new friction coefficients, a new payload mass, and new actuator latency to match the warm, damp motors.

“Great. Now randomize the domains across 10,000 different variations.”

A new report surfaces. Each scenario is parameterized, ranked, and tagged with an estimated learning yield.

These aren’t one-off virtual worlds anymore. They’re flexible experiences the system can probe and stretch. Now, the digital robot doesn’t just repeat the same failure; it explores all the variations around it.
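For the curious: “randomize the domains” refers to domain randomization, where each simulated episode draws its physics parameters from a range instead of using one fixed value. Below is a minimal Python sketch, assuming three hypothetical knobs taken from Bill’s plan (friction, payload mass, actuator latency) with made-up ranges, plus an equally made-up “learning yield” score that simply favors slipperier, heavier, laggier scenarios. A real system would more likely estimate yield from the model’s own uncertainty or failure rates.

```python
import random
from dataclasses import dataclass


@dataclass
class Scenario:
    friction: float             # box-surface friction coefficient
    payload_kg: float           # payload mass in kilograms
    actuator_latency_ms: float  # actuator response delay in milliseconds
    est_yield: float = 0.0      # estimated learning yield, filled in by rank()


# Hypothetical ranges centered on the "wet box" failure mode.
RANGES = {
    "friction": (0.15, 0.45),
    "payload_kg": (2.0, 12.0),
    "actuator_latency_ms": (20.0, 35.0),
}


def sample_scenarios(n: int, seed: int = 0) -> list[Scenario]:
    """Domain randomization: draw each parameter uniformly from its range."""
    rng = random.Random(seed)
    return [
        Scenario(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})
        for _ in range(n)
    ]


def _normalized(name: str, value: float) -> float:
    """Map a parameter value onto [0, 1] within its range."""
    lo, hi = RANGES[name]
    return (value - lo) / (hi - lo)


def rank(scenarios: list[Scenario]) -> list[Scenario]:
    """Score each scenario with a made-up yield proxy and sort best-first."""
    for s in scenarios:
        slip = 1.0 - _normalized("friction", s.friction)  # lower friction = harder
        mass = _normalized("payload_kg", s.payload_kg)
        lag = _normalized("actuator_latency_ms", s.actuator_latency_ms)
        s.est_yield = (slip + mass + lag) / 3.0
    return sorted(scenarios, key=lambda s: s.est_yield, reverse=True)


if __name__ == "__main__":
    ranked = rank(sample_scenarios(10_000))
    top_tier = ranked[:500]  # e.g. the top 5% goes to the first simulation batch
    print(f"kept {len(top_tier)} of {len(ranked)}; best yield {top_tier[0].est_yield:.2f}")
```

Seeding the generator keeps the 10,000 variations reproducible, so a later run can be compared against the same set of worlds.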

In the past, Maya had to sit with a team of 3D developers, hand-authoring edge cases based on intuition and experience, hoping she’d imagined the right failures.

Now, simulation isn’t driven by imagination. It’s driven by evidence.

“Run the top tier of scenarios,” she says. “Stop when marginal gains flatten.”

“Already planned,” Bill replies. “Simulation will terminate once gradient contribution drops below a threshold. Should take 127 minutes.”

The parallelized simulations spin up automatically, along with all of the supporting cloud infrastructure: not as a bespoke experiment, but as a service the system knows how to use.
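“Stop when marginal gains flatten” is essentially an early-stopping rule. Here’s a minimal sketch, assuming each simulation batch reports a single hypothetical “gain” number (it could be a gradient-contribution score, as Bill describes, or a validation improvement); the loop stops once the average of the last few gains drops below a threshold. The threshold and window values are illustrative.

```python
from collections import deque
from typing import Iterable


def run_until_plateau(gains: Iterable[float], threshold: float = 0.01, window: int = 3) -> int:
    """Consume per-batch marginal gains and return how many batches ran.

    Stops as soon as the mean of the last `window` gains falls below `threshold`;
    if that never happens, every batch runs.
    """
    recent: deque = deque(maxlen=window)  # sliding window of the latest gains
    batches = 0
    for gain in gains:
        batches += 1
        recent.append(gain)
        if len(recent) == window and sum(recent) / window < threshold:
            break  # marginal gains have flattened
    return batches


if __name__ == "__main__":
    # Hypothetical gains reported by successive simulation batches.
    gains = [0.2, 0.1, 0.05, 0.02, 0.01, 0.005, 0.002, 0.001]
    print(run_until_plateau(gains))
```

With the sample gains above, it stops after the seventh batch, when the last three gains average about 0.0057. Averaging over a window, rather than reacting to a single low batch, keeps one noisy result from ending the run early.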

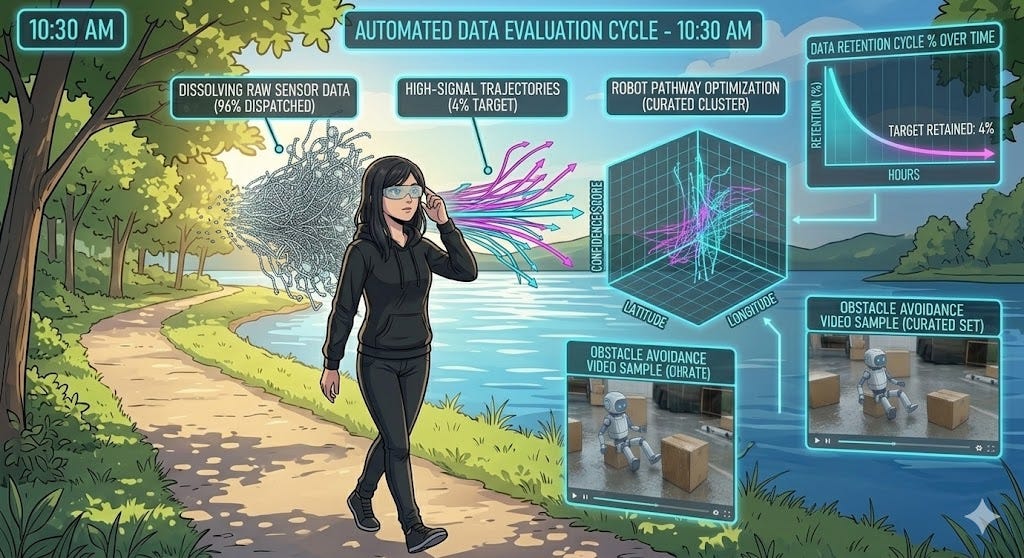

10:30am: Data Evaluation

Maya steps out for a walk with her husband: a refreshing stroll along the lake near their house. They grab a light bite and toss bread to a family of waddling ducks.

As they wrap up and head home, her device buzzes. She throws on her glasses.

“Hi, Maya. Beautiful day, isn’t it? A quick update: the simulations and data parsing are complete.”

A 3D graph materializes on her horizon. Thousands of simulated trajectories appear, and just as quickly, most of them vanish.

Maya watches a thin curve chart downward as the system discards the majority of simulation runs. Only four percent survive.

“Redundant trajectories removed,” Bill narrates. “Retaining only samples that expand the model’s policy boundary or introduce novel recovery behavior.”

Each simulation clip carries a short annotation, such as ‘boundary condition’, ‘compounding failure’, or ‘rare recovery’.
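Bill’s ruthless curation can be approximated with a greedy novelty filter. In this sketch, each trajectory is assumed to come with a hypothetical feature vector summarizing the rollout and a flag for whether it contains a recovery; a trajectory survives only if it shows recovery behavior or sits far enough (Euclidean distance) from everything already kept. Feature-space distance is a stand-in for “expanding the policy boundary”, not a claim about how any particular system measures it.

```python
import math
from dataclasses import dataclass


@dataclass
class Trajectory:
    clip_id: str
    features: tuple[float, ...]   # hypothetical summary vector of the rollout
    has_recovery: bool = False    # did the robot recover from a slip or drop?


def curate(trajs: list[Trajectory], min_novelty: float) -> list[Trajectory]:
    """Greedy novelty filter.

    Keep a trajectory if it contains recovery behavior, or if it is at least
    `min_novelty` away (Euclidean) from every trajectory kept so far.
    """
    kept: list[Trajectory] = []
    for t in trajs:
        novel = all(math.dist(t.features, k.features) >= min_novelty for k in kept)
        if t.has_recovery or novel:
            kept.append(t)
    return kept


if __name__ == "__main__":
    runs = [
        Trajectory("a", (0.0, 0.0)),
        Trajectory("b", (0.05, 0.0)),                     # near-duplicate of "a"
        Trajectory("c", (1.0, 1.0)),                      # genuinely new region
        Trajectory("d", (1.02, 1.0), has_recovery=True),  # near "c", but a rare recovery
    ]
    print([t.clip_id for t in curate(runs, min_novelty=0.5)])
```

Here clip “b” is dropped as redundant while “d” survives because of its recovery. The pairwise comparison is quadratic, which is fine for a sketch; a large corpus would want a nearest-neighbor index instead.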

In the old world, Maya had to hoard all synthetic data because curation was painfully manual. Now, the curation is automatic and ruthless.

For the first time in her career, she has less data and more confidence.

She also has more time. When she gets home, she takes her daughter to music class and joins in on the musical fray, reliving her first-grade glory days on the recorder.

1pm: Time to Learn

Back home after class, Maya sifts through the curated data samples and summons Bill.

“Nice work, Bill. The data looks solid. Let’s update the model.”

A soft confirmation pulse appears in her view.

“Training underway,” Bill says. “I’m blending last night’s real-world success data with the highest-signal simulation runs. I’ll focus the learning on low-friction recovery and weight transfer. Estimated time: 92 minutes.”

Real-world data teaches the system what truth looks like. The simulation gives it the reps.
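Blending real-world data with the highest-signal simulation runs usually comes down to a mixing ratio in each training batch. A minimal sketch, assuming a fixed 25% real / 75% sim split (an arbitrary choice for illustration) and sampling with replacement so a small real-world set can still fill its share of every batch:

```python
import random
from typing import Iterator, Sequence, TypeVar

T = TypeVar("T")


def mixed_batches(
    real: Sequence[T],
    sim: Sequence[T],
    n_batches: int,
    batch_size: int = 8,
    real_fraction: float = 0.25,
    seed: int = 0,
) -> Iterator[list[T]]:
    """Yield n_batches training batches with a fixed real-to-sim ratio.

    Samples are drawn with replacement, so a small real-world set can still
    fill its share of every batch.
    """
    if not 0.0 <= real_fraction <= 1.0:
        raise ValueError("real_fraction must be between 0 and 1")
    rng = random.Random(seed)
    n_real = round(batch_size * real_fraction)
    for _ in range(n_batches):
        batch = rng.choices(real, k=n_real) + rng.choices(sim, k=batch_size - n_real)
        rng.shuffle(batch)  # interleave real and sim samples within the batch
        yield batch


if __name__ == "__main__":
    real = [("real", i) for i in range(5)]   # small, high-value set
    sim = [("sim", i) for i in range(100)]   # large curated sim set
    for batch in mixed_batches(real, sim, n_batches=2):
        print(batch)
```

Once both sources share one sample format, the trainer sees a single stream of experience; the ratio is the main knob deciding how much “truth” versus how many “reps” each step gets.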

In the old days, this part always felt like a gamble. One bad assumption, one misaligned dataset, and the whole run was toast, a fact discovered only after burning a night of (very expensive) compute.

Worse, real and synthetic data never played well together. They lived in different systems, followed different rules, and told slightly different stories about the same moment.

One came from a messy world full of quirks. The other from a clean digital one that always thought it was right.

Getting them to agree used to take weeks of human glue work. Now, the system treats both as the same thing: pure experience.

Maya leans back as learning continues quietly in the background.

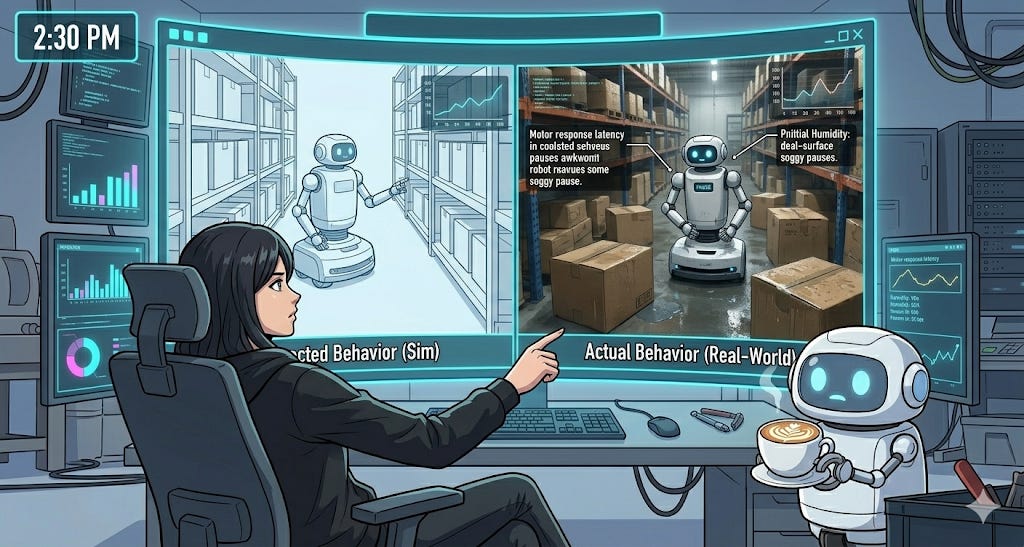

2:30pm: Reality as the Judge

“Maya, training is complete. I’ve deployed a single canary back into the wet environment for validation. Do you want to observe?”

“Yep, give me a side-by-side.”

Two scenes appear.

On the left, a replay of the simulation: what the system expected the robot to do.

On the right, the real world: what actually happened in the warehouse.

The real robot stops and stutters for a split second. Not a huge deal, but it could compound over time.

“Why the pause?” Maya asks.

“Motor response latency,” Bill replies. “Today’s humidity is higher than last night’s, increasing actuator latency by about 8%. I’ll adjust the simulation parameters and do one more quick rollout.”

Maya smiles. This is her favorite part.

Simulation is no longer guessing reality. It’s mirroring it.
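Bill’s latency diagnosis is a small system-identification problem: compare what the controller commanded with what the actuator actually did, find the delay, and feed it back into the simulator. A minimal sketch, assuming the command and measured response are sampled on the same clock; it uses plain (un-normalized) cross-correlation, which works for a clean pulse but would normally be mean-subtracted and normalized on noisy real signals. The sample period and millisecond values are hypothetical.

```python
def estimate_delay(command: list[float], response: list[float], max_lag: int) -> int:
    """Return the lag (in samples) at which `response` best lines up with `command`.

    Plain cross-correlation: for each candidate lag, score the overlap
    sum(command[t] * response[t + lag]) and keep the best-scoring lag.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        score = sum(c * r for c, r in zip(command, response[lag:]))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def latency_change_pct(sim_ms: float, measured_ms: float) -> float:
    """Percent change the simulator's latency parameter needs to match reality."""
    return 100.0 * (measured_ms - sim_ms) / sim_ms


if __name__ == "__main__":
    # A command pulse and a response that arrives 3 samples late.
    command = [0.0] * 5 + [1.0] * 3 + [0.0] * 12
    response = [0.0] * 8 + [1.0] * 3 + [0.0] * 9
    sample_period_ms = 2.0  # hypothetical sensor sample period
    lag = estimate_delay(command, response, max_lag=10)
    print(f"measured delay: {lag * sample_period_ms} ms")
```

For example, if the simulator assumed 25 ms and the canary measured 27 ms, `latency_change_pct(25.0, 27.0)` returns 8.0, an 8% increase to write back into the sim before the next rollout.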

3:00pm: Quiet Deployment

“The second training run is complete. Rolling out a new model to the first 10 units.”

“Thanks, Bill, you’re a gem.”

Maya watches as the first squad of robots revisits the scene of the crime.

“Early indicators are positive. Recovery times are improving. No regressions detected,” Bill says.

Maya now watches the average delivery time KPI. It slowly drifts from yellow to green.

Success. No emergency calls. No one even notices.
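The single-canary-then-10-units pattern is a staged rollout with a regression gate. A minimal sketch, assuming a hypothetical `evaluate(n)` callback that runs `n` units on the new model and returns their average recovery and transport times; promotion continues only while neither metric regresses by more than 2% against the old model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CohortStats:
    mean_recovery_s: float   # average time to recover from a slip or drop
    mean_transport_s: float  # average package transport time


def passes_gate(baseline: CohortStats, candidate: CohortStats, tolerance: float = 0.02) -> bool:
    """True if neither metric is more than `tolerance` (fractional) worse than baseline."""
    return (
        candidate.mean_recovery_s <= baseline.mean_recovery_s * (1 + tolerance)
        and candidate.mean_transport_s <= baseline.mean_transport_s * (1 + tolerance)
    )


def staged_rollout(
    fleet_size: int,
    baseline: CohortStats,
    evaluate: Callable[[int], CohortStats],
    stages: tuple[int, ...] = (1, 10),
) -> int:
    """Promote the new model cohort by cohort; return how many units end up on it.

    `evaluate(n)` is a hypothetical hook that runs n units on the new model and
    reports their stats. On the first regression, rollout stops and the fleet
    stays at the last size that passed the gate.
    """
    sizes = sorted({min(s, fleet_size) for s in (*stages, fleet_size)})
    deployed = 0
    for size in sizes:
        if not passes_gate(baseline, evaluate(size)):
            return deployed  # regression: roll this cohort back and stop
        deployed = size
    return deployed
```

Gating on both metrics means a model that recovers faster but slows overall transport still gets held back, which is the “no regressions detected” check in code form.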

3:30pm: Freedom

Maya’s days no longer drag on into the wee hours of the night. Her mission is complete, and she can go enjoy the afternoon with her family.

As she packs up, Bill chirps.

“Maya, one more thing.”

She pauses. “Whatya got?”

“There’s a cold front coming in three weeks,” Bill says. “Morning temperatures will slow actuator response.”

Maya sighs. “Of course.”

“I’ve already pulled real-world data from our Canadian fleet,” he continues. “They saw this exact pattern last month. Cold starts, slight delays. Nothing dramatic, but enough to matter.”

A simple flow appears in her view: real footage from snowy warehouses feeding directly into new practice scenarios.

“Simulations will run overnight,” Bill says. “By the time the cold arrives here, the model will be ready.”

Maya smiles.

When she started this job, surprises like this meant late nights and rushed fixes. Now, the system learns ahead of time.

She heads for the door. Outside, it’s still warm. Inside the data flywheel, winter has already passed.

Hope you enjoyed this glimpse into the future.

Our next essay will break down which parts of this vision are real today, and what will be required to bring it all to life tomorrow.

Until then, subscribe below and stay tuned!